Did we nearly have a 50-year head start on some of Big Tech’s stickiest ethical dilemmas? Say hello to the “What If” men of The Simulmatics Corporation. As so expertly illuminated in Jill Lepore’s book IF/THEN: How The Simulmatics Corporation Invented the Future, they pursued a radical idea: Use computers to predict and influence people’s behavior. Today we call it business as usual. But as issues of algorithmic bias, misinformation and privacy protection continue to raise red flags about the future of predictive algorithms and artificial intelligence, what clues can we find in the past?

Scott is then joined by Nabiha Syed, President of The Markup, an investigative journalism startup exploring how powerful actors use technology to reshape society. Listen in for a fascinating conversation about algorithmic harm, the many possible roads to effective regulation, and why progress starts with the willingness to get things wrong.

Additional Resources:

The Algorithmic Justice League

Look Both Ways E8: Community Memory & The Decentralized Web

Episode Transcript

Algorithms Harming People

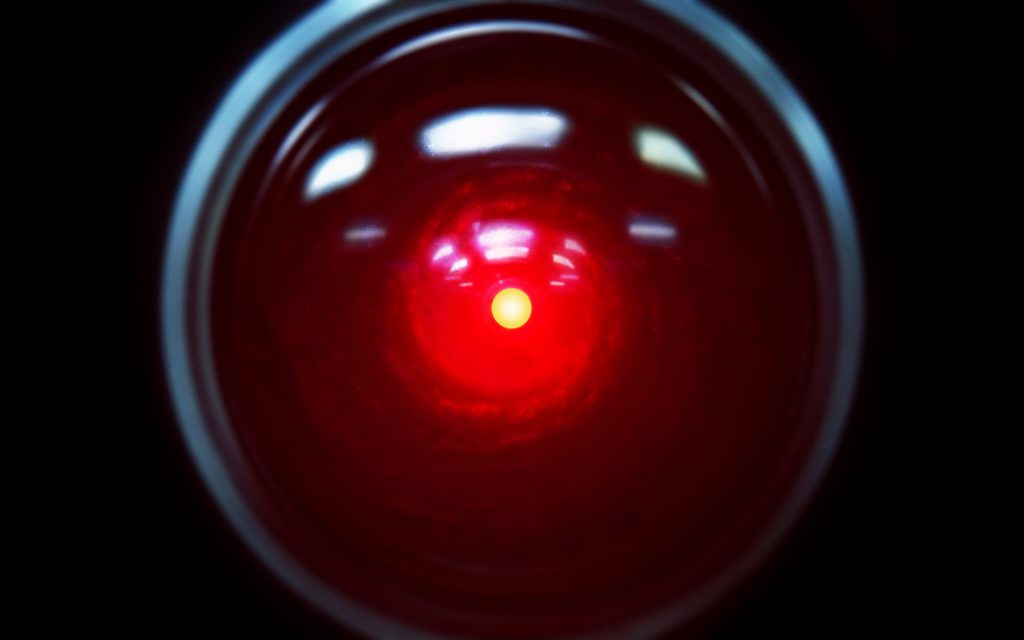

Computers advancing far enough to harm their human creators is a well that Hollywood will never stop coming back to. Meanwhile, in the real world…Artificial intelligence harming people has become anything but fictional. The shape of that harm is just less obvious than a fleet of power hungry robots destroying cities while conveniently explaining their plan along the way.

Algorithms used in policing and legal sentencing have led to wrongful convictions, bias and prejudice. Algorithms at the heart of social media giants like Twitter, Facebook, Instagram and YouTube can stoke division and spread misinformation. In 2016, Cambridge Analytica demonstrated, with terrifying precision, how elections can be influenced using the same underlying technology that recommends baby bottles to new parents, espresso machines to coffee lovers or the Sweet Valley High series to me.

Cases of algorithmic harm are well documented. The efforts to introduce accountability into the equation have been substantial, but still change has been hard to come by. Which begs the question, perhaps best articulated by the unforgettable, emotionally charged performance of Keanu Reeves as Neo in the Matrix:

(Keanu Clip) “Why?”

“Why?” is right Keanu. Let’s get into it.

Hello everyone, I’m Scott Hermes, welcome to Look Both Ways: a podcast about what the experiments of the past can still teach us about today’s most pressing problems. The show is made possible by Kin + Carta, a digital transformation consultancy that exists to build a world that works better for everyone.

On this week’s episode: We’ll talk with Nabiha Syed, President of The Markup, a non-profit newsroom doing extraordinary work to answer big, complicated questions about Big Tech. Questions like:

How transparent should companies be about how consumer data is being used? When do algorithms blur the line between targeted advertising and behavior manipulation? And how can we protect people from algorithmic harm, without hindering our ability to use AI for good?

These questions often feel new. Like they’re obstacles we couldn’t have anticipated simply because we’re in territory we’ve never explored. But what if that wasn’t exactly true?

The People Machine

On a beach in Long Island in 1961, inside a large, strange-looking honeycombed dome, armed with not much more than a mess of chalkboards, manual computer punch cards, and some potentially dangerous ideas, a group of men from the Simulmatics Corporation raised those same questions.

They even answered some of them. From buying a box of cereal to casting a vote and everything in between, they believed human behavior could be predicted using computers.

They aimed to build the systems and methods necessary to prove it. They called it the People Machine.

Thanks to Jill Lepore’s (Le-pour) 2020 book IF/THEN: How the Simulmatics Corporation Invented the Future, we’re now able to better understand who they were, what they hoped to accomplish, what we can learn from their demise, and why we nearly had a 50-year head start on solving some of the biggest ethical dilemmas of our time.

As Lepore writes in IF/THEN: “They believed they had invented the “atom bomb” of the social sciences. What they could have not predicted was that it would take decades to detonate. And detonate it did.”

So as preface to our conversation with Nabiha Syed, we’re going to do our best to bring you that story. The story of Simulmatics has a sort of Forrest Gump quality to it. They somehow show up in some of the most critical moments of the 20th century. And it will be narrated, by yours truly, from the seat of a park bench. But without any box of chocolates, sadly.

Starting on November 4, 1952 and the 42nd United States Presidential Election.

Predicting an Election

Election Day newsrooms have always radiated a particular type of frenzied, dramatic energy. The Election of 1952, between Democrat Adlai Stevenson and Republican Dwight Eisenhower, was the first that invited Americans inside to witness the action firsthand.

But TV wasn’t the only new character being introduced to the election night stage.

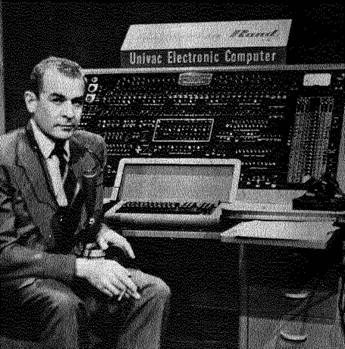

That’s legendary CBS newsman Charles Collingwood. The UNIVAC was the Universal Automatic Computer, originally built by Remington Rand for the US Census Bureau. It was roughly as tall as an upright piano, weighed a cool 16,000 pounds, and was blanketed with the blinking lights, buttons and switches of an airplane cockpit. For many Americans watching at home, the UNIVAC was their first glimpse of a computer in action.

Only it wasn’t a computer they were seeing.

It was a prop – a fake switchboard designed to LOOK like a UNIVAC machine.

CBS really was using a UNIVAC to predict the outcome of the election. But because it was too heavy to move, the machine had to stay in Philadelphia.

Instead, the UNIVAC’s predictions were relayed via phone to the CBS newsroom in New York.

The race was expected to be close. Polls had Stevenson and Eisenhower running about even. So when the UNIVAC predicted Eisenhower would defeat Stevenson in a landslide, CBS actually chose not to share the prediction with the public. Surely, it wasn’t accurate, they thought.

Eisenhower won in a landslide, just as the UNIVAC had predicted. Here’s Collingwood coming clean to viewers later on.

<clip of Charles Collingwood>

What else could computers predict?

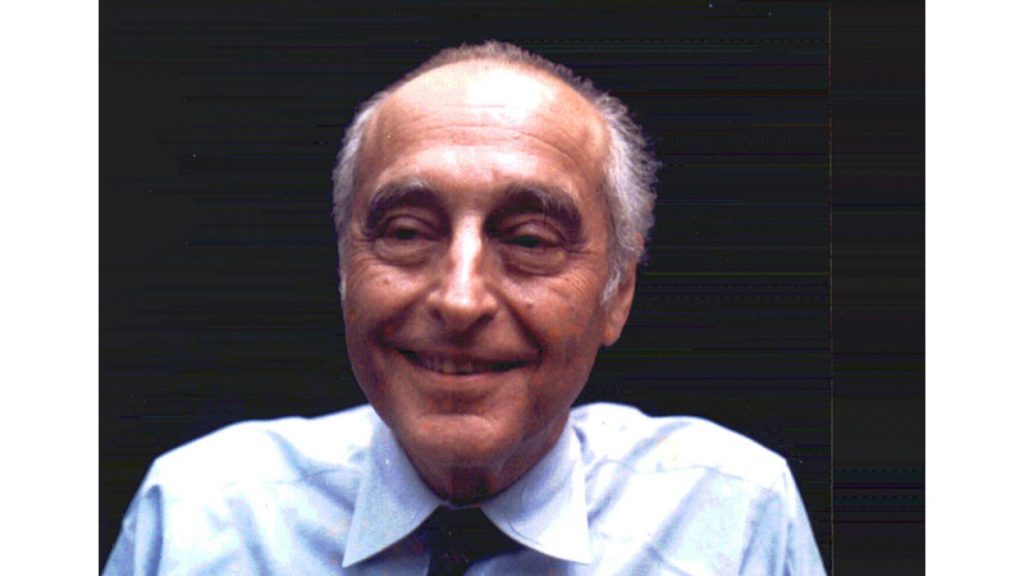

Watching the 1952 election coverage along with the rest of the country was a man named Ed Greenfield. A loud, idealistic man with the smile of a politician and the charm of a huckster, Ed worked in the booming advertising industry.

His ad firm, Greenfield & Co, had worked on the Stevenson campaign. So the loss left him disappointed. But that noisy, strange, mysterious, so-called “electronic brain” gave him an idea.

What else could computers predict? If computers could forecast the results of an election as votes are coming in, could they predict how people would vote long before they head to the polls? And if so, could different scenarios be tested to evaluate the likely outcomes of different strategies on the campaign trail?

If you’re thinking “Isn’t that how literally every political candidate now operates?” The answer is: Yes, Kent. But at the time, it might as well have been the stuff of science fiction.

As Lepore writes in IF/THEN: “Ed Greenfield collected people the way other men collect comic books or old stamps or vintage cars.” He knew everyone. And he thought he had a hell of an idea.

So he put together a team.

Greenfield assembled his best squad of computer engineers, behavioral scientists and mathematicians to see if it would work. Most notably:

Ithiel de Sola Pool was a professor at MIT, and a leading expert in behavioral science. In 1956, Pool had done work for Ed Greenfield’s company when consulting for Adlai Stevenson. In 1959, he became the other founding partner of Simulmatics Corporation. He’d later be called both a prophet of technology and a war criminal. But more on that later.

Fun fact for my fellow computer nerds: One of Greenfield’s other top recruits was this fella:

Alex Bernstein carved out a slice of computing history and the development of what we now call artificial intelligence when, in 1957, he taught an IBM 704 computer to play chess well enough to beat even highly skilled human opponents.

Project Macroscope

In 1959, the newly assembled Simulmatics Corporation moved into a modest office on Madison Avenue in New York just a short walk from IBM’s Global Headquarters.

The plan was to use election returns and opinion surveys to divide the American electorate into 480 distinct “voter categories,” not unlike the type of “segmentation” we’re used to hearing about today. Working class moms in suburbs of southern cities, college educated African American men in the north, fiscally conservative Lumbersexuals of the northwest…

Rented IBM 704 computers would be crammed with data about previous elections and voter opinion surveys, and then programmed with IF/THEN statements – one of the basic building blocks of Fortran, the programming language used to program the machines that were capable of defeating chess masters.

In Lepore’s words: “you could ask it any question about the kind of move a candidate might make, and it would be able to tell you how voters, down to the tiniest segment of the electorate, would respond.”
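For the programmers listening, the idea can be sketched in a few lines. This is only an illustrative toy in Python, not Simulmatics’ actual Fortran: the two segments, their head counts, their baseline support and the size of each shift are all made-up numbers, and the names `Segment`, `apply_rule` and `simulate` are hypothetical. It shows the basic shape: split the electorate into categories, encode an IF/THEN rule about how each category reacts to a campaign move, then compare scenarios.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One voter category (all values here are invented for illustration)."""
    name: str
    religion: str
    voters: int      # hypothetical head count
    support: float   # hypothetical baseline share backing the candidate


def apply_rule(segment: Segment, scenario: str) -> float:
    """Return the segment's support after applying one IF/THEN rule."""
    support = segment.support
    # IF the campaign confronts the religion issue,
    # THEN shift support differently for each group (assumed shift sizes).
    if scenario == "confront_religion_issue":
        if segment.religion == "Catholic":
            support += 0.10
        elif segment.religion == "Protestant":
            support -= 0.02
    # Keep the share inside the valid 0..1 range.
    return min(max(support, 0.0), 1.0)


def simulate(segments: list[Segment], scenario: str) -> float:
    """Weighted share of all voters backing the candidate under a scenario."""
    total = sum(s.voters for s in segments)
    backing = sum(s.voters * apply_rule(s, scenario) for s in segments)
    return backing / total


SEGMENTS = [
    Segment("urban Catholic", "Catholic", 300, 0.60),
    Segment("rural Protestant", "Protestant", 700, 0.40),
]

baseline = simulate(SEGMENTS, "status_quo")
confront = simulate(SEGMENTS, "confront_religion_issue")

print(f"status quo:        {baseline:.3f}")
print(f"confront the issue: {confront:.3f}")
```

With these invented inputs, confronting the issue comes out slightly ahead of the status quo, because the gain in the smaller group outweighs the small loss in the larger one. The plan described above used 480 categories rather than two.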

This was the People Machine. Ed Greenfield, never one to miss a branding opportunity, called the plan “Project Macroscope.”

Fearing the future of predictive algorithms

In 1959, Greenfield sent the proposal for Project Macroscope to Newton Minow. Minow was one of Stevenson’s closest advisors (and the man who once referred to TV as a “vast wasteland”).

(as if the inner thoughts of Minow)

Reducing people to punch cards and data points…“simulating” the behavior of real American voters without their knowledge, letting a machine influence the decisions of people seeking the country’s highest office…Minow was horrified.

Minow sent it to Arthur Schlesinger, Pulitzer Prize-winning Harvard historian and also a close advisor to Adlai Stevenson, seeking his advice. Fun fact, I roomed with Arthur Schlesinger’s nephew when I went to NYU.

Attached to the Project Macroscope proposal, Minow included his own note reading:

“Without prejudicing your own judgment, my opinion is that such a thing (a) cannot work, (b) is immoral, (c) should be declared illegal.”

Schlesinger shared his initial anxieties, but stopped short there, adding “I do believe in science and don’t like to be a party to choking off new ideas.”

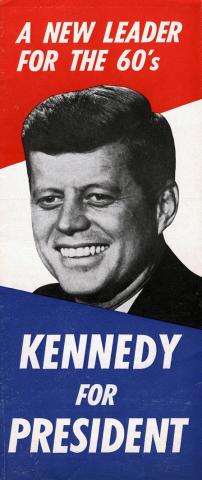

With seemingly nothing standing in their way, Simulmatics just needed a buyer. In a young man from Massachusetts who was quickly becoming the Democratic party’s rising star… they found one.

The 1960 Kennedy Campaign

At the time, the Kennedy campaign saw two major obstacles in its path to victory: Low support among Black voters, and public concern over his Catholic faith. The Kennedy campaign commissioned three reports from Simulmatics to help inform what to do next.

The technology was seemingly magic, but Ed Greenfield and Ithiel de Sola Pool were adept salesmen. They compared it to weather forecasts: Use information about what’s happened in the past to understand what’s likely to happen in the future. Seems reasonable enough, right?

One report concluded that because Kennedy had likely already lost anyone considered “anti-Catholic,” he should take the religion issue head-on. The report read:

“IF the campaign becomes embittered, THEN he will lose a few more reluctant Protestant votes to Nixon but will gain Catholic and minority votes.” (119)

That’s Kennedy reading the report to himself. Out loud for some reason.

A record number of Americans, upwards of 67 million, go to the polls to elect the 35th president of the United States…. source: CriticalPast

…the unexpected delayed climax saw Kennedy the victor. At 43 years of age, he is the youngest man ever voted into the White House.

While it’s hard to identify the precise influence of these reports, the campaign’s decisions didn’t break with any of the recommendations made by Simulmatics. Regardless, in Lepore’s words: “They believe they had reinvented American politics”

Just weeks before Kennedy was inaugurated, the January issue of Harper’s Magazine published a bombshell story about a group of mysterious “What-if” men from a company called Simulmatics and their so-called “People Machine” that had effectively won Kennedy the election.

If our now all-too-familiar spectrum of criticism is anchored between:

“Informed, intelligent questions” on one side and

“This is worse than Nazi Germany” on the other…responses to the Harper’s story covered the full gamut. Some warned of the dangers inherent to reducing voters to punch cards, and not acting before “clearing it with the people machine.”

Others argued the technology made “the tyrannies of Hitler, Stalin and their forebears look like the inept fumbling of a village bully.”

Ah, nuance.

Kennedy had yet to actually be sworn into office and already his victory was being called into question. Inside Simulmatics, many worried the negative press would be their undoing. Ed Greenfield, the ad man…had a different reaction…

It seems Greenfield was a “no press is bad press” kind of guy. As he saw it, they had made it to the big leagues.

Simulation as a Service

That summer, the men of Simulmatics brought their families along to frolic and play on the beaches of Long Island, while they toiled away in their beachside dome from outer space.

The ability to predict, and even influence the future behavior of real people, by way of machine, was real…and available for purchase.

Government contracts poured in. Commissioned studies included sexy, made-for-Hollywood topics like:

The simulation of automobile traffic for the Bureau of Public Roads and

The effect of rural agricultural practices on rural communication infrastructure…

Greenfield soon leveraged his contacts from his advertising days and met with just about every major American consumer products company.

If we could simulate how people will vote, why can’t we use it to understand what type of toothpaste people will buy? But as their ambitions grew bigger and bigger, so did the gap between Greenfield’s promises and his company’s ability to deliver.

The Unraveling

Ahead of the 1962 US midterm elections, Simulmatics landed a huge deal with the New York Times, pledging to help them lead the new frontier of data-led journalism. But the cracks formed early, and after a series of equipment delays, mechanical failures, unreliable results, and a bill from Greenfield for nearly 3x the initial estimate…one newspaper called the debacle the “Great Computer Hoax of 1962”.

Okay so this is the part of the episode where we quickly mention some of the remarkable details we had to keep on the cutting room floor.

A year after their debacle with the New York Times, President Kennedy is killed, leaving the country in a state of shock. A year later, Eugene Burdick, a political scientist, acclaimed fiction author and friend / failed recruit of Greenfield’s, publishes a novel about a Republican Presidential candidate who hires SIMULATION Enterprises to manipulate voters and defeat the then still incumbent President Kennedy. He titles the book The 480 after the voter categories created by Simulmatics in 1960. Again Ed Greenfield thinks it will be great for business, and again it churns up a new batch of PR issues.

Other details include:

- A mathematician known as “Wild Bill” McPhee sketching out the earliest Simulmatics IP from within Bellevue psychiatric hospital.

- Failed attempts to woo the 1968 Nixon Campaign

- Proposed “riot prediction” simulations

- scandalous summers at the company’s Long Island beachside Thunderdome office

- and lots more.

So, again – go check out Jill Lepore’s IF / THEN – it’s well worth the read.

But the Forrest Gumping continues!

“They’re sending me to Vietnam…it’s this whole other country.”

It sure is, Forrest. Next stop on the People Machine Express: War! What could go wrong?

Funded by the US Department of Defense under Robert McNamara, Simulmatics arrived in Vietnam in 1965, occupying a fully functional office in Saigon.

First they focused on simulating outcomes on the battlefield. Then things got weirder. Led by Pool, “counterinsurgency” simulations under codename Project Camelot, aimed to predict where “communist revolutions” were likely to take place next. The program was also later targeted at Latin American countries like Venezuela and Colombia.

After ethical questions were raised about Project Camelot, most behavioral scientists pulled out of contracts with the Department of Defense. Pool did not. Although highly lucrative at first, the time spent by Simulmatics staff in Vietnam proved quite similar to the war itself: misinformed, highly costly, and ultimately fruitless.

Traveling back and forth from New York to Vietnam, Ed Greenfield was a mess…

He started drinking more, and his wife left him. As the anti-war movement gained momentum in the late 60s, a movement he largely agreed with, his unraveling was only accelerated by the fact that his company was complicit in the fight.

As Jill Lepore writes in IF/THEN:

“He’d wanted to help liberal Democrats win elections and Ralston Purina sell dog food. He’d wanted to convince smokers to switch cigarette brands. Counterinsurgency? This wasn’t what he wanted to be doing.”

More clients pulled their contracts. In 1968, Ed Greenfield laid off his entire staff. All that remained were founders and chief stakeholders. They moved to sell the company. After Greenfield failed to enact a sale, bankruptcy proceedings started in 1970.

The dream was over. Everyone who had invested money into the company lost it.

For his involvement in the Vietnam war and the US Pentagon, Ithiel de Sola Pool was called a war criminal. For years, protests raged at MIT outside his office, until they marched to the listed headquarters of the Simulmatics Corporation only to find it empty and abandoned.

Greenfield continued his alcohol-fueled tailspin. He kept dreaming up companies and ideas, including the ambition to collect so much data, that he could pinpoint how people were feeling and then sell that information to other companies. He called it the Mood Corporation. And it sounds a lot like the core business model of Facebook and Twitter. His vision went unrealized.

Greenfield died of a heart attack in 1983.

Six decades after their founding, Jill Lepore writes about Simulmatics:

Hardly anyone, almost no one, remembers Simulmatics anymore. But beneath that honeycombed dome, the scientists of this long-vanished American corporation helped build the machine in which humanity would, by the twenty-first century, find itself trapped and tormented: Stripped bare, driven to distraction, deprived of its senses, interrupted, exploited, directed, connected and disconnected, bought and sold, alienated and coerced, confused, misinformed, and even governed. They never meant to hurt anyone.

The National Data Center

Of all the IF / THEN scenarios to consider about Simulmatics, there’s perhaps one that provides the most compelling potential alternate outcome. The failed effort to create the National Data Center.

The Library of Congress stores books. The National Archives stores manuscripts and government records. As proposed by a group of social scientists and legal scholars in 1964, The National Data Center would store information.

The thinking was that computers would soon allow data to be collected, organized and analyzed in ways never before possible. Of the work cited to demonstrate the future influence of data science, Simulmatics’ work with the 1960 Kennedy Campaign was one of the most prominent.

At the time, the Johnson administration was introducing the “Great Society” programs focused on reducing poverty, improving civil and voting rights, environmental protections and increased aid to public schools. Because the government was intending to collect vast amounts of data to enact those programs, the idea was that some sort of infrastructure was needed to govern how that information could be used.

The Battle Between Privacy & Progress

The proposal was met with a flurry of privacy concerns, from both Congress and the public, and across both sides of the aisle.

The Wall Street Journal called the proposed National Data Center “an incipient octopus.”

The New York Times called it “an Orwellian nightmare.”

The ACLU agreed it was an overreach.

The idea of letting the government amass huge amounts of information on American citizens proved too big a pill to swallow. But as hearings were held that summer to debate the proposed plan, Paul Baran, a computer scientist from RAND, said we were missing the bigger picture.

They were talking about data collection as if refusing to build this large government storage facility would prevent it from happening.

As Baran explained, this data is going to be collected whether you build a giant building called “The National Data Center” or not. Baran was one of a handful of people in 1964 who understood that the internet was coming. Soon, computers would all be connected to one another in a vast network of networks capable of collecting, aggregating and storing vast amounts of data, with or without the government.

The opportunity for debate presented by the National Data Center, Baran argued, wasn’t in the center, but in the swamp of serious ethical questions that lay beneath it all. What is data? Who does information belong to? Can data be sold? Can it be exchanged and shared? How is it kept secure?

If these questions had just begun to percolate in 1966, they started boiling about a decade ago and now nobody can figure out how to turn off the heat.

The immediate function of the National Data Center would have been to dictate how the GOVERNMENT can handle information. But the argument is: it could have given us SOME set of standards for how data can and should be handled. It was certainly a flawed proposal in many ways, and probably not the right solution at the time. But perhaps it WAS the 50-year head start we nearly had on figuring out how to keep people safe from the dangers of algorithmic predictions run amok.

No center was built. No agency established. No standards set.

Here’s what Jill Lepore says in IF/THEN:

“Exactly as Paul Baran predicted, a de facto national data center was established soon enough, by way of the linking of computers across federal agencies and, eventually across corporations, without any regulatory regime whatsoever. They had kicked the question down the road.”

An FDA for Algorithms

Okay so for those of you who are still with me on my Forrest Gumpian park bench, fast forward to 2022…

The argument for creating regulatory infrastructure related to data, artificial intelligence and its impact on society is simple: Democratic governments have a responsibility to protect their people from harm.

The FDA exists to oversee what we put in our bodies: food and drugs. So for any packaged food product, any restaurant, medical or pharmaceutical product…the FDA approval and regulatory process is there to say: in order to sell people stuff they’re going to ingest, you can’t just do whatever you want. Because if left unchecked, corners will get cut and people will get hurt. Which is exactly why the FDA was created.

Prior to the Pure Food and Drug Act of 1906, the closest thing in the US to the FDA was the Good Housekeeping Seal of Approval. As people learned more about the horrifying conditions in American meatpacking plants in cities like Chicago, it was clear that something needed to be done to ensure the safety of consumers.

Does the FDA create an impenetrable bottleneck to innovation? This week I’ve eaten tortillas made from egg whites, chicken nuggets made from black beans and a chocolate scone from my favorite local bakery that gave me a near out of body experience. While world hunger is far from solved, we seem to at least be doing a decent job of finding new ways to feed and care for ourselves.

The argument for SOME sort of oversight is that, just like the FDA, it would create necessary incentives to be more thorough, develop better ways of doing things, and instill confidence in the people that they are not being put in harm’s way.

I can go eat at a restaurant without being paranoid that there might be a rodent in my dinner because there is a separate legal body routinely ensuring conditions are maintained to PREVENT that from happening.

Shouldn’t everyone be able to use technology to apply for a job, communicate with friends, or watch a video, with full confidence that they’re not being inadvertently harmed or disadvantaged by algorithms working behind the scenes?
